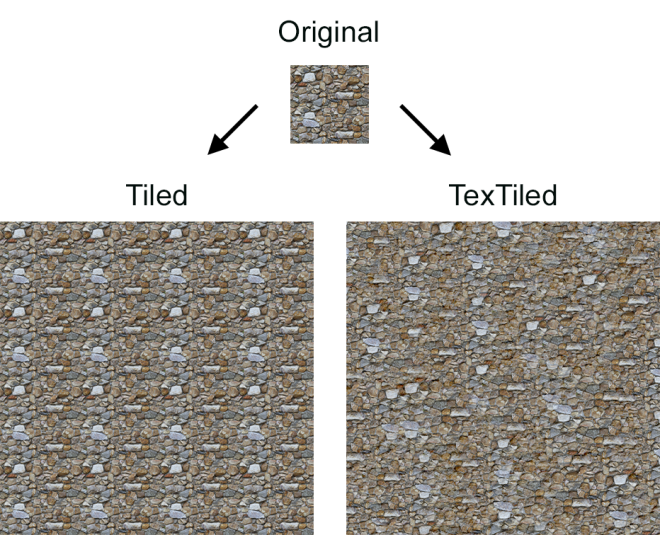

A quick little tool I threw together one evening because I was fascinated by how it worked! The original method was written up as a shader by Inigo Quilez; if you’re not aware of his work, you should definitely check it out! The basic concept is simple: avoid repetition in tiling textures by scattering the image using a Voronoi pattern and blending the copies together based on the Voronoi cells. I made some modifications to allow control of contrast and scale, and it works beautifully. See for yourself:

Installing

Download from Nukepedia.

Simply place the folder in your plugins directory and add that folder to your init.py using nuke.addPluginPath().

Code Breakdown

This is a surprisingly simple tool, fewer than 100 lines of code, so hopefully this won’t be too long-winded.

The premise is the same as creating cellular noise, except instead of drawing the gradient, we’ll draw in a texture, blending together the texture from each adjacent cell point weighted by its distance from the current pixel.

First, we work out what tile we’re currently in, and what uv space we’re in inside that tile:

    float2 uv = float2(
        (pos.x + 0.5f) / width,
        (pos.y + 0.5f) / height
    );
    uv *= tiling;
    float2 p = floor(uv);
    float2 f = float2(fmod(uv.x, 1.0f), fmod(uv.y, 1.0f));

We fit the pixel position into our 0-1 UV space, and then multiply by the amount of tiling we want. We can then find what tile we’re in from the integral part of our UVs (e.g. 0.3 is in tile 0, 1.8 is in tile 1, and so on), and we can take the fractional part to find our current UV position (0-1) inside that tile.
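To make the arithmetic concrete, here is a minimal Python sketch of the same step, intended for experimenting outside Nuke rather than as the kernel itself. The function name `tile_and_fraction` and the example numbers are made up for illustration.

```python
import math

def tile_and_fraction(px, py, width, height, tiling):
    """Return ((tile_x, tile_y), (frac_x, frac_y)) for a pixel position."""
    # Sample at the pixel centre and normalise to 0-1 UV space.
    u = (px + 0.5) / width
    v = (py + 0.5) / height
    # Scale by the tiling amount so each integer step is one tile.
    u *= tiling
    v *= tiling
    # Integral part: which tile we are in. Fractional part: where inside it.
    tile = (math.floor(u), math.floor(v))
    frac = (math.fmod(u, 1.0), math.fmod(v, 1.0))
    return tile, frac

# Hypothetical 200x100 image, tiled 4 times.
tile, frac = tile_and_fraction(px=150, py=20, width=200, height=100, tiling=4)
print(tile, frac)
```

With these numbers the pixel lands in tile (3, 0), very close to the left edge of that tile and most of the way up it.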

Now, we do our Voronoi calculation. We’re going to generate one point to focus our texture on in each tile, so we search each adjacent tile for the point it generates. We need to be able to generate the exact same point for each tile whenever we call it, so we use a hash function which generates pseudo-random numbers: the result appears random but, given the same input, will always give the same output.

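    float4 hash4(float2 p) {
        // Four different dot products give four different seeds per cell
        float4 val = sin(float4(1.0f + dot(p, float2(37.0f, 17.0f)),
                                2.0f + dot(p, float2(11.0f, 47.0f)),
                                3.0f + dot(p, float2(41.0f, 29.0f)),
                                4.0f + dot(p, float2(23.0f, 31.0f)))) * 103.0f;
        // Keep the fractional part (fmod keeps the sign, so this is in -1..1)
        for (int i = 0; i < 4; i++)
            val[i] = fmod(val[i], 1.0f);
        // Remap to 0..1
        return (val + 1) * 0.5f;
    }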
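If you want to poke at the hash outside Nuke, a rough Python port might look like the sketch below. It uses double precision where the kernel uses single-precision floats, so the exact numbers will not match the kernel; the point is only to show the two properties we rely on, repeatability and a 0-1 output range.

```python
import math

def hash4(px, py):
    """Python port of the hash4 function above, for experimenting outside Nuke."""
    seeds = (
        1.0 + (px * 37.0 + py * 17.0),
        2.0 + (px * 11.0 + py * 47.0),
        3.0 + (px * 41.0 + py * 29.0),
        4.0 + (px * 23.0 + py * 31.0),
    )
    out = []
    for s in seeds:
        v = math.sin(s) * 103.0
        v = math.fmod(v, 1.0)        # keeps the sign, so v is in (-1, 1)
        out.append((v + 1.0) * 0.5)  # remap to the 0-1 range
    return tuple(out)

# Same tile in, same values out, however many times we ask.
print(hash4(2, 3) == hash4(2, 3))
# A neighbouring tile gets different values.
print(hash4(2, 3) != hash4(3, 3))
```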

We loop over all 9 adjacent cells (including our current one), taking each cell’s offset from our current tile (g) and its pseudo-random offset (o) to get the position of the cell point relative to our current pixel (r). The distance measure (d) is the dot product of r with itself. Note that this is the squared length rather than the true distance; as we’re only using it as a weight relative to the other points, that is fine, and it is much cheaper to calculate than the actual length. We want the texture to blend together rather than have sharp seams, so we apply a falloff (w).

    for (int j = -1; j <= 1; j++)
        for (int i = -1; i <= 1; i++)
        {
            // Create a voronoi cell point
            float2 g = float2(float(i), float(j)); // Adjacent cell offset
            float4 o = hash4(p + g); // Random offset
            float2 r = float2(g.x - f.x + o.x, g.y - f.y + o.y); // Resulting co-ordinate
            float d = dot(r, r); // squared length of offset
            float w = exp(-5.0f * d); // gaussian falloff

This gives us our blended distance weight from the center of the current cell point, but we also need to get the texture colour. To add more variation, this is where we can add scaling. Because we know the center point of our cell point, as long as each pixel scales out the same distance from it, we’ll get the result we want. We already know we’re generating the same values for each cell point from our hash function, so we can use one of these to set our scale per cell point, and apply our offset. Now we have x and y values for the pixel to sample, we just convert them to image resolution. However, these values may not be in the 0-1 space as they could wrap around the image, so we have to make sure we fit them into that range before converting to image space.

            // Use the offset to pick a pseudo-random point to sample
            // Using r instead of uv offsets from center of cell, allowing us to scale each individually
            float randf = (o.x - 0.5f) * scale + 1.0f;
            float x = r.x * randf + o.z * offset;
            float y = r.y * randf + o.w * offset;
            // Wrap x and y around image and sample
            x = fmod(fmod(x, 1.0f) + 1.0f, 1.0f) * width;
            y = fmod(fmod(y, 1.0f) + 1.0f, 1.0f) * height;
            float4 c = bilinear(src, x, y);
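The double fmod in the wrap step is there because fmod keeps the sign of its input, so a single fmod would leave negative coordinates negative. A small Python sketch of just that step (the helper names `wrap01` and `to_pixel` are made up for this example):

```python
import math

def wrap01(x):
    """Wrap any coordinate into the 0-1 range, mirroring the double fmod above."""
    return math.fmod(math.fmod(x, 1.0) + 1.0, 1.0)

def to_pixel(x, y, width, height):
    """Wrap a UV-style coordinate and convert it to image space."""
    return wrap01(x) * width, wrap01(y) * height

print(math.fmod(-0.25, 1.0))  # a single fmod stays negative
print(wrap01(-0.25))          # the double fmod wraps round to the far side
print(to_pixel(-0.25, 1.5, 200, 100))
```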

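Now we can add up the weighted texture values (va) and the weights themselves (w1) from each cell point, so that dividing va by w1 gives our weighted average. We also add up the squared weights (w2), which the contrast step needs. All three accumulators start at zero before the loop.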

            // Accumulate weighted colour
            va += w * c;
            w1 += w;
            w2 += w * w;
        }
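Putting the loop together, here is a rough Python sketch of the same weighted accumulation, again for experimenting outside Nuke rather than as the kernel itself. The names `hash2`, `blend` and `sample` are made up for this example: `hash2` stands in for the first two components of hash4, and `sample` is any function you supply that returns a single value for a lookup coordinate (the per-cell scale and offset are left out to keep it short).

```python
import math

def hash2(cx, cy):
    """Stand-in for the first two components of hash4: a repeatable point per cell."""
    def h(s):
        return (math.fmod(math.sin(s) * 103.0, 1.0) + 1.0) * 0.5
    return h(1.0 + cx * 37.0 + cy * 17.0), h(2.0 + cx * 11.0 + cy * 47.0)

def blend(u, v, sample):
    """Weighted average of `sample` over the 3x3 cells around (u, v)."""
    px, py = math.floor(u), math.floor(v)
    fx, fy = u - px, v - py
    total, weight_sum = 0.0, 0.0
    weights = []
    for j in (-1, 0, 1):
        for i in (-1, 0, 1):
            ox, oy = hash2(px + i, py + j)
            rx, ry = i - fx + ox, j - fy + oy  # vector from pixel to cell point
            d = rx * rx + ry * ry              # squared distance
            w = math.exp(-5.0 * d)             # Gaussian falloff
            total += w * sample(rx, ry)
            weight_sum += w
            weights.append(w)
    return total / weight_sum, weights

# A flat grey "texture" must come back unchanged, whatever the weights are.
value, weights = blend(2.3, 1.6, lambda x, y: 0.7)
print(value, max(weights))
```

Dividing by the summed weight is what keeps the overall brightness stable: cells whose points are far away contribute almost nothing, and the nearest one or two dominate.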

This next part, I admit, I don’t fully understand. It’s a contrast formula I found online and haven’t had the time to double-check. If anyone wants to explain it before I get around to updating this, please do!

    float4 res = contrast + (va - w1 * contrast) / sqrt(w2);
    float4 col = mix(va / w1, res, offset);
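One possible reading of the formula, offered as a hedged guess rather than a confirmed explanation: averaging several samples pulls the result towards the mean, which flattens contrast, and dividing the deviation from the mean by sqrt(w2) scales it back up. The Python sketch below tests that reading on random numbers. It assumes that `contrast` plays the role of the texture’s mean value and that the samples from different cells are independent; both are assumptions, as are the weights and the function names.

```python
import math
import random
import statistics

def plain_blend(values, weights):
    """Ordinary weighted average: va / w1."""
    return sum(w * v for w, v in zip(weights, values)) / sum(weights)

def contrast_blend(values, weights, mean):
    """Same shape as the kernel line: mean + (va - w1 * mean) / sqrt(w2)."""
    va = sum(w * v for w, v in zip(weights, values))
    w1 = sum(weights)
    w2 = sum(w * w for w in weights)
    return mean + (va - w1 * mean) / math.sqrt(w2)

rng = random.Random(0)
weights = [0.5, 0.3, 0.2]  # made-up blend weights
mean, sigma = 0.5, 0.1     # made-up texture statistics
plain, kept = [], []
for _ in range(20000):
    values = [rng.gauss(mean, sigma) for _ in weights]
    plain.append(plain_blend(values, weights))
    kept.append(contrast_blend(values, weights, mean))

print(statistics.pstdev(plain))  # spread shrinks after plain averaging
print(statistics.pstdev(kept))   # spread stays close to sigma
```

If that reading is right, the final mix simply fades between the plain average and the contrast-restored version.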

And that’s all there is to it! We tiled the image, randomly offset each tile, checked the texture value that each cell would place at our current pixel, and weighted it based on how far the pixel was from that cell’s point. Seamless textures!

Of course, this method does have its limitations. Hard edges become an issue due to our smoothed blend, so distinct lines can look strange when caught in the blending areas. Similarly, patterns with parallel lines like bricks or tiles are unlikely to align again when scattered, so we don’t see the continuous pattern we’d like to. Perhaps there’s a good way to account for this? I have some ideas, but for now, enjoy tiling!

Wow! Thank you for sharing, Matthew! Special thanks for providing the source and the explanation!


You’re welcome! Thanks for taking the time to read it. I’m always very aware of how long-winded these can be.


Great !

This one helped me a lot.

Thank you.

Manoj.PC
